Scientific researchers push for sharing data and greater transparency in experiments

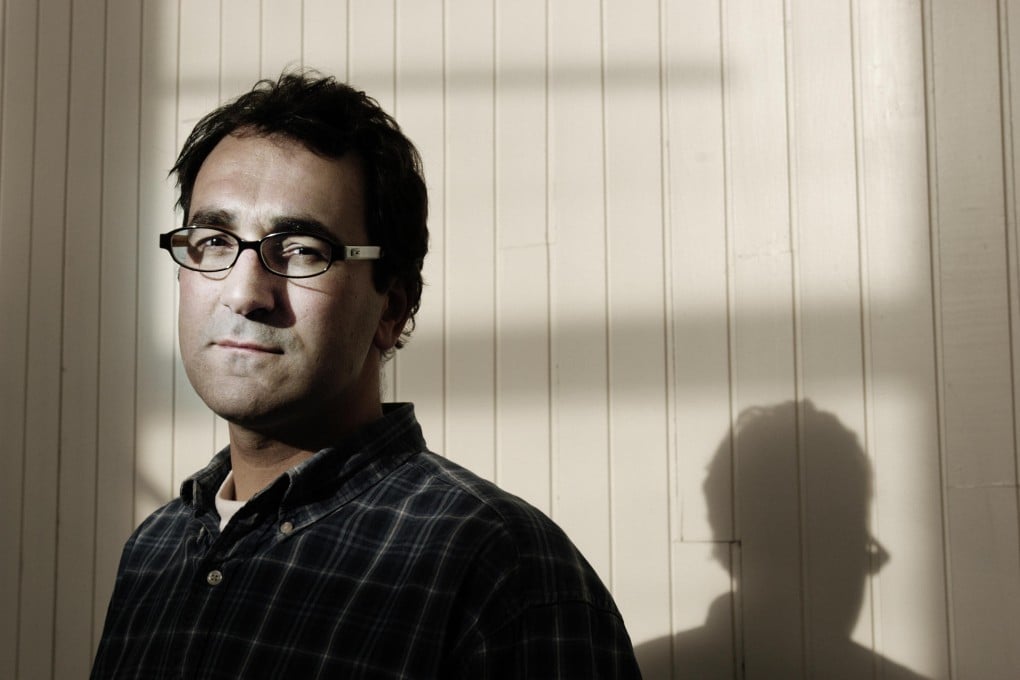

Diederik Stapel, a professor of social psychology in the Netherlands, had been a rock-star scientist, regularly appearing on television and publishing in top journals. Among his striking discoveries was that people exposed to litter and abandoned objects are more likely to be bigoted.

And yet there was often something odd about his research. When students asked to see his data, he couldn't produce it readily. Colleagues would sometimes look at his data and think: It's beautiful. Too beautiful. Most scientists have messy data, contradictory data, incomplete data, ambiguous data. This data was too good to be true.

Then, in late 2011, Stapel admitted that he'd been fabricating data for many years.

The Stapel case was an outlier, an extreme example of scientific fraud. But this and several other high-profile cases of misconduct resonated in the scientific community because of a much broader, more pernicious problem: Too often, experimental results can't be reproduced.

That doesn't mean the results are fraudulent or even wrong. But in science, a result is supposed to be verifiable by a subsequent experiment. An irreproducible result is inherently squishy.

And so there's a movement afoot, and it is building momentum rapidly. Top-tier journals, such as Science and Nature, have announced new guidelines for the research they publish.

"We need to go back to basics," said Ritu Dhand, the editorial director of the Nature group of journals. "We need to train our students over what is okay and what is not okay, and not assume that they know."