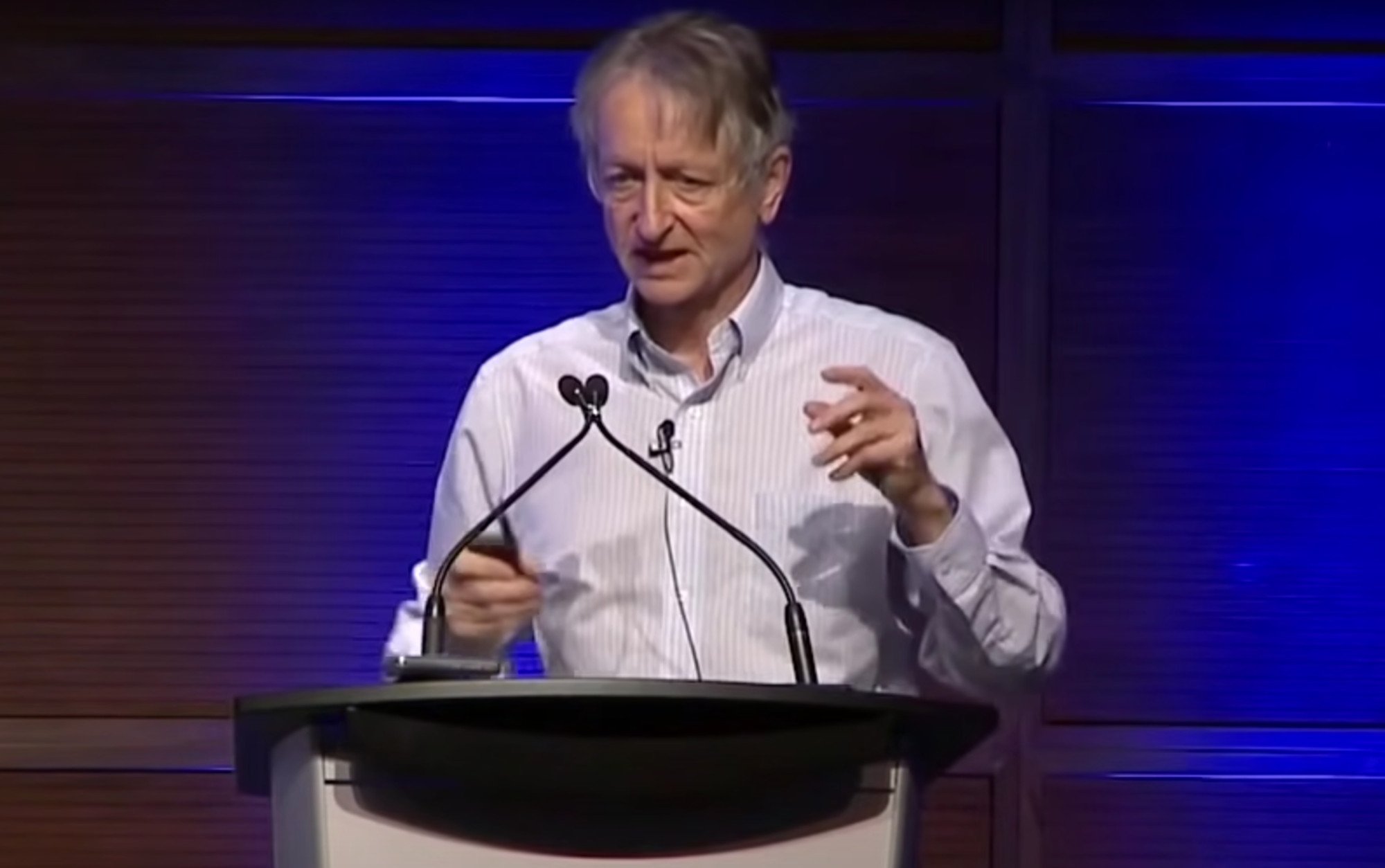

‘Godfather of AI’ Geoffrey Hinton quits Google to warn the world about dangers posed by the technology

- Computer scientist Geoffrey Hinton said the latest advances in artificial intelligence posed ‘profound risks to society and humanity’

- He said that competition between tech giants was pushing new AI technologies at dangerous speeds, risking jobs and spreading misinformation

“Look at how it was five years ago and how it is now,” he was quoted as saying in a New York Times piece published on Monday.

“Take the difference and propagate it forwards. That’s scary.”

Hinton said that competition between tech giants was pushing companies to release new AI technologies at dangerous speeds, risking jobs and spreading misinformation.

“It is hard to see how you can prevent the bad actors from using it for bad things,” he told the Times.

In 2022, Google and OpenAI – the start-up behind the popular AI chatbot ChatGPT – started building systems using much larger amounts of data than before.

Hinton told the Times he believed that these systems were eclipsing human intelligence in some ways because of the amount of data they were analysing.

“Maybe what is going on in these systems is actually a lot better than what is going on in the brain,” he told the paper.

While AI has been used to support human workers, the rapid expansion of chatbots like ChatGPT could put jobs at risk.

AI “takes away the drudge work”, but “might take away more than that”, he told the Times.

The scientist also warned about the potential spread of misinformation created by AI, indicating that the average person will “not be able to know what is true any more”.

Hinton notified Google of his resignation last month, according to the Times report.

Jeff Dean, lead scientist for Google AI, thanked Hinton in a statement to US media.

“As one of the first companies to publish AI Principles, we remain committed to a responsible approach to AI,” the statement added.

“We’re continually learning to understand emerging risks while also innovating boldly.”

Hinton did not sign that letter at the time, but told the Times that scientists should not “scale this up more until they have understood whether they can control it”.
