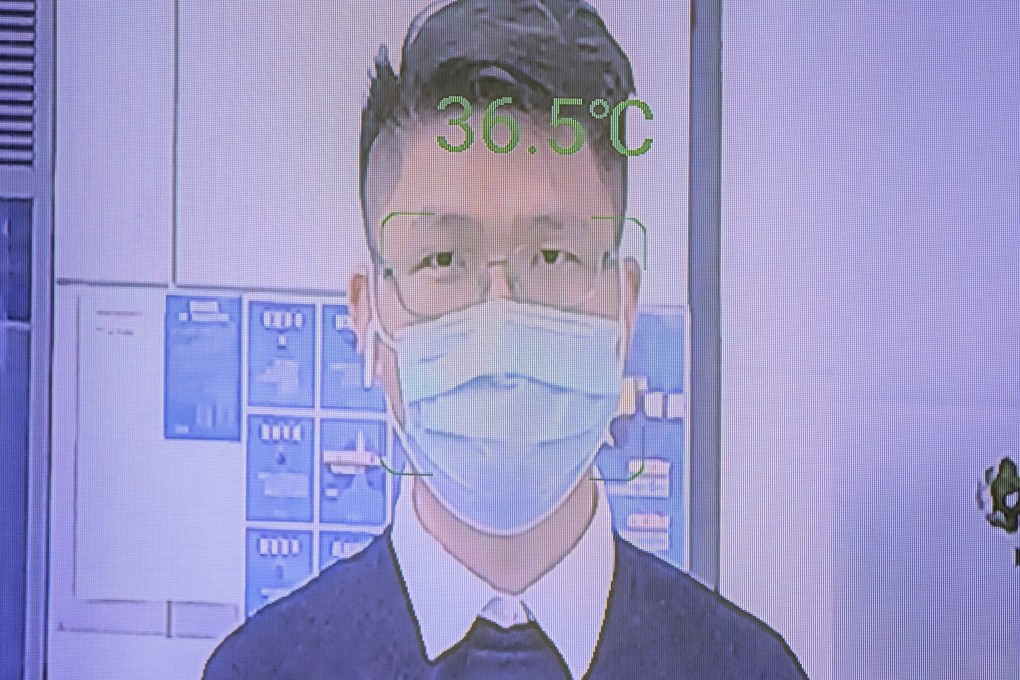

Explainer: What is facial recognition, and why is it more relevant than ever during the coronavirus pandemic?

- Facial recognition software has been increasingly deployed by countries to secure access and improve surveillance, especially during the pandemic

- But the technology is controversial, not just because data leaks are common, but also because of its potential to exacerbate racial or gender biases

Around the world, law enforcement and border control agencies have increasingly deployed facial recognition, an artificial intelligence-based technology, to secure access and improve surveillance.

Here’s a summary of what we know about facial recognition technology.

How does facial recognition work?

Facial recognition systems identify people from a database of images, including still photographs and video. Deep learning – a subset of artificial intelligence – speeds up a system’s face-scanning capabilities as it learns more about the data it is processing. Such systems require vast amounts of information to become faster and more accurate.

Essentially, these systems generate a so-called “unique face print” for each subject by reading and measuring dozens to thousands of “nodal points”, including the distance between the eyes, the width of the nose and the depth of the eye sockets. When connected to a network of surveillance cameras, recognition systems can process a wider range of features, including a subject’s height, age and the colour of their clothes.
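
For readers who want to see the idea in code, the following is a minimal sketch of the "face print" matching described above, not how any particular commercial system works. It assumes a handful of hypothetical 2D landmark names (`left_eye`, `nose_left`, `chin` and so on) already located in an image, turns them into a tiny feature vector, and looks for the closest stored print. Real systems measure far more points, typically in 3D and with deep learning models; the function names and the `threshold` value here are illustrative assumptions.

```python
import math


def face_print(landmarks):
    """Build a small feature vector from measurements between landmarks.

    `landmarks` maps hypothetical point names to (x, y) pixel coordinates.
    """
    eye_distance = math.dist(landmarks["left_eye"], landmarks["right_eye"])
    nose_width = math.dist(landmarks["nose_left"], landmarks["nose_right"])
    nose_to_chin = math.dist(landmarks["nose_tip"], landmarks["chin"])
    # Divide by the eye distance so the print does not depend on image size.
    return (nose_width / eye_distance, nose_to_chin / eye_distance)


def identify(probe, database, threshold=0.1):
    """Return the name of the closest stored print, or None if none is close.

    `threshold` is an arbitrary illustrative cut-off, not a tuned value.
    """
    best_name, best_distance = None, float("inf")
    for name, stored in database.items():
        distance = math.dist(probe, stored)
        if distance < best_distance:
            best_name, best_distance = name, distance
    return best_name if best_distance <= threshold else None
```

In this sketch, a database would be a dictionary mapping each enrolled person's name to the tuple returned by `face_print`. A new image is matched by computing its own print and passing it to `identify`, which returns `None` when no stored print is within the threshold.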