AlphaGo’s China showdown: why it’s time to embrace artificial intelligence

As Google DeepMind’s programme prepares for its match with Chinese Go grandmaster Ke Jie, is it time to sit back and let the computers take over?

To err is human. Yet it is a sign of how far computer programming has come that to err is also to be artificially intelligent.

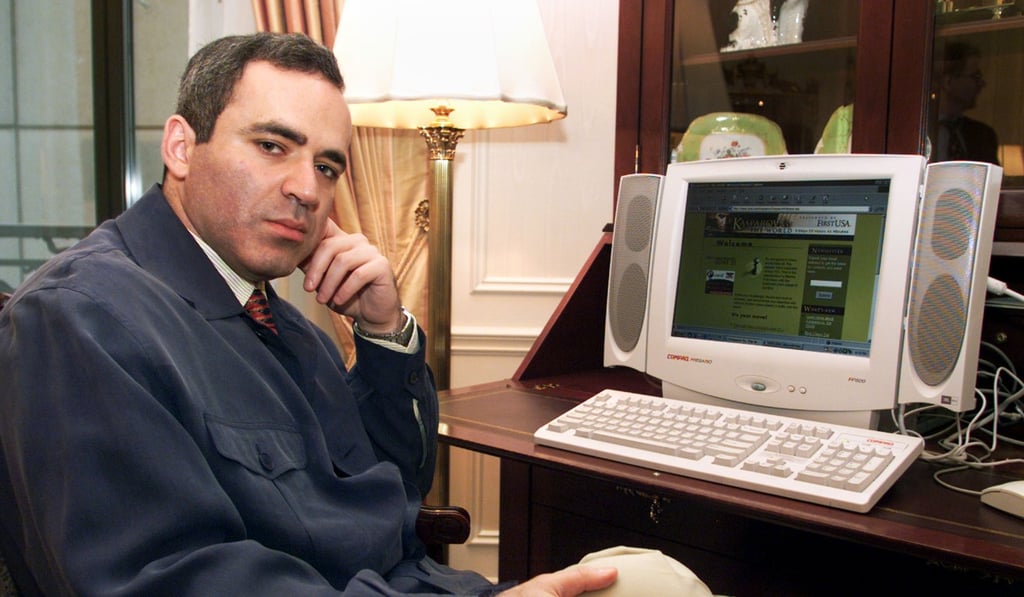

When IBM’s Deep Blue won its six-game chess match against Garry Kasparov in May 1997, marking the first match defeat of a reigning world chess champion by a computer under tournament conditions, one particular moment stood out in Kasparov’s mind.

As he observed in Time magazine: “I got my first glimpse of artificial intelligence, when in the first game of my match with Deep Blue, the computer nudged a pawn forward to a square where it could easily be captured. It was a wonderful and extremely human move... I had played a lot of computers but had never experienced anything like this. I could feel – I could smell – a new kind of intelligence across the table.”

In Nate Silver’s book The Signal and the Noise, IBM scientist Murray Campbell from the Deep Blue team revealed that the “extremely human move” in the game against Kasparov was actually a bug in the programme that was later fixed.

Nevertheless, for many like Kasparov, that instance of artificial erring has come to be seen as a pivotal moment in the development of artificial intelligence, like the proverbial genie leaving the bottle.

Fast forward 20 years from Kasparov’s defeat and computers are still battling human grandmasters, but now the battlefield has moved to the ancient Chinese board game of Go. Go is seen as a far more difficult game for computers to master because of its heavy reliance on intuition, strategic thinking and winning multiple battles across the board. A computer cannot simply memorise all combinations of pieces on the board, assess the situation, and then construct and execute a winning strategy, as it can in chess.